AI Risks Don't Wait for Committees

How operational risk management turns AI governance from policy into action.


Rick Hamilton, Naresh Nayar

2/11/2026 · 3 min read

In a previous piece, Point-of-View: AI Governance is Broken, we described a three-pillar approach to AI governance – the policies, principles, and accountability structures that define an organization’s intent. Yet across the enterprise, a familiar pattern persists: policies are written, principles are endorsed, and committees are formed. Then, when an AI system degrades quietly or creates unintended downstream consequences, leaders discover that governance stopped at the point of good intentions.

Imagine a demand-forecast model whose error rate drifts after a quiet upstream data change; revenue leakage accumulates for weeks before anyone can prove where the shift began. The postmortem is not about ‘AI ethics’ in the abstract; it is about telemetry, ownership, and escalation. AI risk doesn’t live in policy documents. It emerges through day-to-day decisions, unexpected system behavior, and operational tradeoffs, the very areas where AI risk management matters most.
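The forecast example above suggests the kind of telemetry check that would shorten such a postmortem. What follows is a minimal sketch, not a production monitor. It assumes a daily error series (for example, mean absolute percentage error) is already being logged; the function name `first_alert_index` and the parameters `baseline_n`, `window`, and `tolerance` are hypothetical choices made for illustration.

```python
from statistics import mean


def first_alert_index(errors, baseline_n=14, window=7, tolerance=1.5):
    """Return the index of the observation on which a drift alert would first fire.

    The first `baseline_n` values define "normal" error. An alert fires when the
    mean of the most recent `window` values exceeds `tolerance` times that
    baseline. Returns None if no window ever breaches the threshold.
    All thresholds here are illustrative assumptions, not recommended values.
    """
    if len(errors) < baseline_n + window:
        raise ValueError("need at least baseline_n + window observations")

    baseline = mean(errors[:baseline_n])
    threshold = baseline * tolerance

    # Slide a fixed-size window over everything after the baseline period.
    for start in range(baseline_n, len(errors) - window + 1):
        if mean(errors[start:start + window]) > threshold:
            return start + window - 1  # last observation in the breaching window
    return None


# Hypothetical series: stable error for 19 days, then a step change.
daily_error = [0.10] * 19 + [0.20] * 10
alert_day = first_alert_index(daily_error)
```

A rolling window trades detection speed for fewer false alarms. The operational point is that a dated alert, sent to a named owner, gives the postmortem a starting point for locating when the shift began.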

In a mature AI program, governance sets direction and intent, while operational risk management determines how those intentions translate into real outcomes. Because risk manifests unevenly, not all AI systems require the same level of operational rigor. Controls must scale with business impact, ensuring speed for low-risk experimentation while demanding stronger discipline for systems that influence customers, critical decisions, or regulated outcomes.

Governance Sets the “What” and the “Why”

An enterprise’s governance framework establishes the organization’s intent for AI use. This framework answers foundational questions such as:

What risks will we tolerate, and in which contexts?

What ethical and legal boundaries constrain us?

Which use cases advance strategic priorities?

Who decides, and who is accountable?

For governance to be effective, accountability must sit with the business. Every AI system with meaningful impact on customers, clinical or financial outcomes, regulatory posture, or brand trust should have a named business executive as the accountable risk owner – someone with authority over the system’s real-world consequences. Technology and risk teams enable controls and provide independent challenge, but they do not own the outcomes.

In our three-pillar governance framework, these decisions are shaped through distributed accountability:

First-line employees who surface ground truth from real AI use

A cross-functional oversight body that aligns risk with enterprise priorities

An independent audit function empowered to challenge assumptions

Together, these pillars define decision rights, escalation paths, and acceptable risk.

Operational Risk Management: the “How” and the “Who”

Operational AI risk management is where governance intent turns into daily practice. When implemented well, this layer identifies and mitigates risk as systems evolve; implements controls and guardrails; monitors performance for drift and anomalies; and responds to incidents with containment and learning.

This layer is where accountability becomes tangible. When something goes wrong, operational risk management determines whether the organization detects the issue early, responds effectively, and learns from the event. Without this layer, governance remains theoretical.

A Complementary View of AI Risk

AI risk is often described through categorical frameworks, such as the NIST AI Risk Management Framework, which organize risk into areas like data integrity, model validation, security, fairness, and monitoring. These taxonomies are useful because they help organizations enumerate the kinds of risks that may exist across the AI lifecycle.

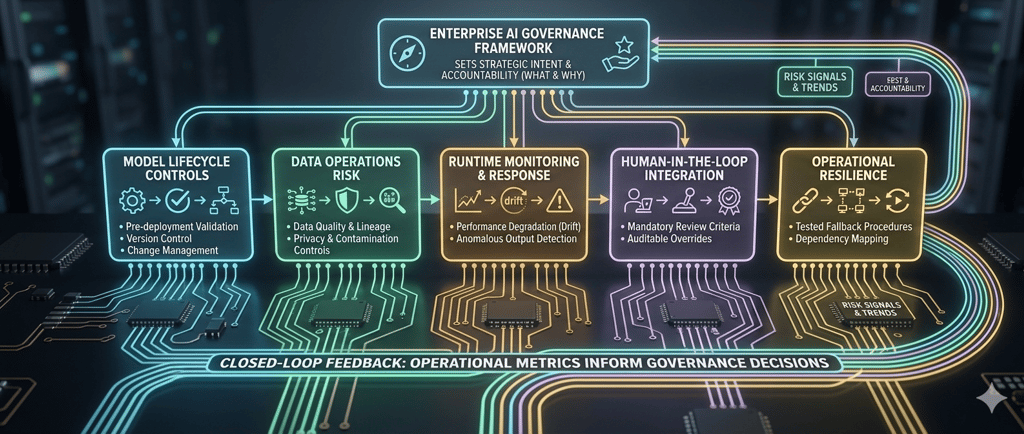

Our framework does not dispute this view, but it shifts the axis of organization. In addition to classifying risk by type, we organize it by how risk is managed inside an enterprise: lifecycle controls, runtime monitoring, data operations, human oversight, and operational resilience. These domains map directly to ownership boundaries, escalation paths, and day-to-day decision-making.

This distinction, moving from abstract categories to operating domains, matters for two reasons. First, it enables proportionate rigor. Not every AI application requires the same level of oversight; an internal meeting summarization tool does not demand the same lifecycle controls as a customer-facing diagnostic system. By organizing risk into operational domains, leadership can establish “fast-track” pathways for low-stakes experimentation while applying deep technical discipline to high-impact systems. This approach prevents governance from becoming a uniform bottleneck and ensures that oversight resources are allocated where they most effectively protect the enterprise.
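One way to make proportionate rigor concrete is to derive a system's control set from a small number of impact attributes. The sketch below is an illustration under stated assumptions: the tier names, the three impact flags, and the control lists are hypothetical and are not taken from any standard or from the full article.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    FAST_TRACK = "fast-track"
    STANDARD = "standard"
    HIGH_IMPACT = "high-impact"


@dataclass(frozen=True)
class AISystem:
    name: str
    customer_facing: bool
    influences_critical_decisions: bool
    regulated_outcome: bool


# Illustrative control sets; a real program would define its own.
REQUIRED_CONTROLS = {
    Tier.FAST_TRACK: ["usage logging"],
    Tier.STANDARD: ["usage logging", "pre-release validation", "drift monitoring"],
    Tier.HIGH_IMPACT: [
        "usage logging",
        "pre-release validation",
        "drift monitoring",
        "human review of outputs",
        "incident runbook",
        "independent audit",
    ],
}


def assign_tier(system: AISystem) -> Tier:
    """Map impact attributes to a tier, so controls scale with business impact."""
    if system.regulated_outcome or system.influences_critical_decisions:
        return Tier.HIGH_IMPACT
    if system.customer_facing:
        return Tier.STANDARD
    return Tier.FAST_TRACK


summarizer = AISystem("meeting summarizer", False, False, False)
diagnostic = AISystem("customer-facing diagnostic", True, True, True)

for system in (summarizer, diagnostic):
    tier = assign_tier(system)
    print(f"{system.name}: {tier.value} -> {REQUIRED_CONTROLS[tier]}")
```

Keeping the rule this simple is deliberate: a tiering rule that business owners can read and contest is more likely to be applied consistently than a scoring model no one can explain.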

Second, this approach clarifies accountability. Risk categories describe what can go wrong. Operating domains determine who is accountable, when signals surface, and how governance adapts based on real system behavior. In practice, AI failures rarely occur because a risk category was misunderstood. Failures occur because signals were missed, ownership was unclear, or feedback never reached someone empowered to act.
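The three failure modes named above (missed signals, unclear ownership, feedback that never reaches someone empowered to act) can each be guarded against with a simple registry. The following is a rough sketch under assumptions: the class names, the `"material"` severity label, and the routing rule are hypothetical, and a real deployment would route into the organization's existing incident tooling rather than return a list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisteredSystem:
    name: str
    business_owner: str          # named accountable executive
    escalation_contacts: tuple   # ordered, first responder first


class Registry:
    """Refuses systems without an owner and routes signals to named people."""

    def __init__(self):
        self._systems = {}

    def register(self, system: RegisteredSystem) -> None:
        # Unclear ownership is rejected at registration, not discovered later.
        if not system.business_owner.strip():
            raise ValueError(f"{system.name}: a named business owner is required")
        if not system.escalation_contacts:
            raise ValueError(f"{system.name}: at least one escalation contact is required")
        self._systems[system.name] = system

    def route(self, system_name: str, severity: str) -> list:
        """Return who must be notified for a signal of the given severity."""
        system = self._systems.get(system_name)
        if system is None:
            raise KeyError(f"unregistered system: {system_name}")
        recipients = list(system.escalation_contacts)
        # Material signals always reach the person empowered to act.
        if severity == "material" and system.business_owner not in recipients:
            recipients.append(system.business_owner)
        return recipients


registry = Registry()
registry.register(
    RegisteredSystem(
        name="demand-forecast",
        business_owner="VP Supply Chain",
        escalation_contacts=("ml-oncall", "data-platform-oncall"),
    )
)
notified = registry.route("demand-forecast", severity="material")
```

The design choice worth noting is that ownership is enforced when a system is registered, so an alert can never arrive without a destination.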

This framing does not create a new governance program. It embeds AI risk into the organization’s existing risk machinery: enterprise risk management, security incident response, compliance, audit, and vendor oversight. The question is not whether AI controls exist, but whether AI failures move through the same escalation, accountability, and resolution pathways as other material risks.

When AI governance is treated as an overlay, signals fragment and accountability is diffused. When it is embedded into the enterprise operating model, risk becomes visible, escalation becomes routine, and learning becomes systematic. This makes AI governance executable in practice: complementing established standards while focusing attention on the mechanisms that turn policy into action and principles into sustained performance.

We explore this topic more thoroughly in the full Substack article, which covers the five domains of operational AI risk management: Model Lifecycle Controls, Data Operations Risk, Runtime Monitoring and Response, Human-in-the-Loop Integration, and Operational Resilience. Read the full article here.